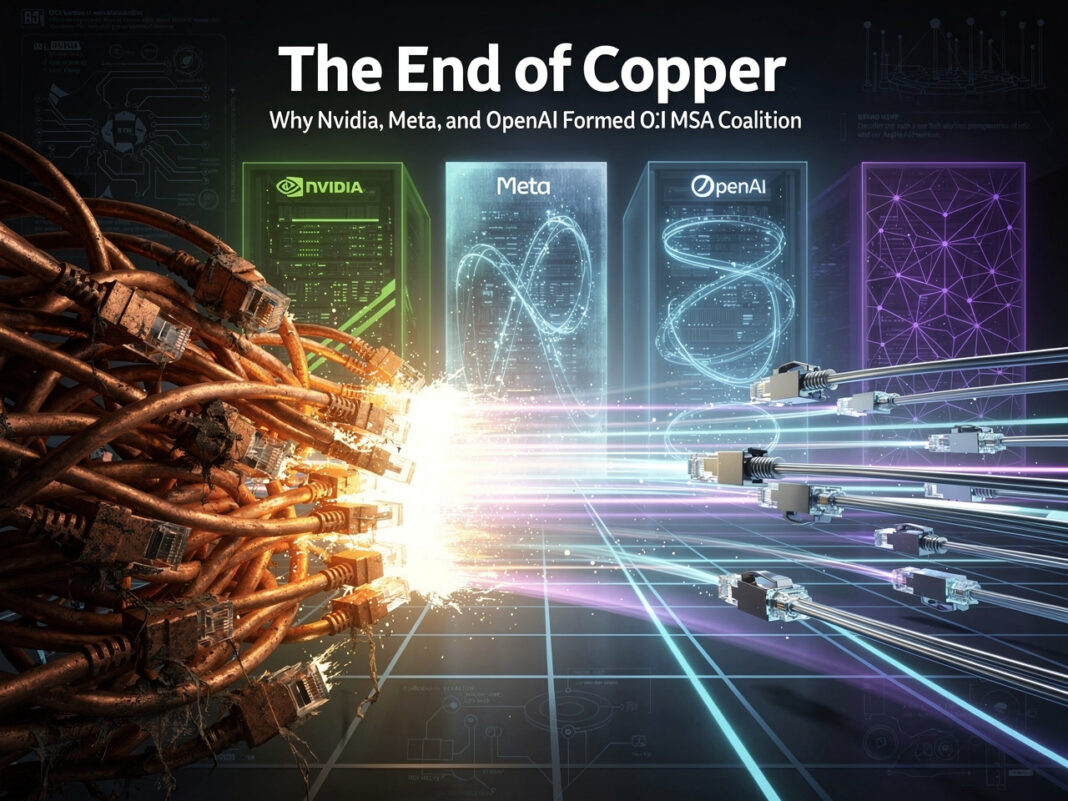

The AI industry has hit a physical wall. As GPUs grow dramatically more powerful, the copper wires connecting them have become a critical bottleneck. To address this, industry titans AMD, Broadcom, Meta, Microsoft, Nvidia, and OpenAI have announced the Optical Compute Interconnect Multi-Source Agreement (OCI MSA).

This consortium aims to create an open standard for high-speed optical interconnects, moving the industry from electrical signals to light-based data transfer.

Why This Is a Turning Point for AI Infrastructure

1. Breaking the I/O Bottleneck

Modern GPUs can process data faster than traditional copper links can deliver it, a problem known as the I/O bottleneck. Optical interconnects can carry terabits per second with low latency, even over long distances, helping ensure that GPUs are not left “starving” for data.
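A back-of-the-envelope model makes the “starving GPU” idea concrete. This is a simplified sketch under stated assumptions, not how any vendor measures utilization: it assumes communication overlaps perfectly with compute, so the GPU only idles when moving data takes longer than computing on it. The names `StepProfile` and `idle_fraction`, and all the numbers, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StepProfile:
    """Hypothetical per-step workload for one GPU (illustrative numbers only)."""
    flops: float           # floating-point operations per training step
    bytes_moved: float     # bytes exchanged with other GPUs per step
    peak_flops: float      # sustained compute throughput, FLOP/s
    link_bandwidth: float  # interconnect bandwidth, bytes/s


def idle_fraction(p: StepProfile) -> float:
    """Fraction of each step the GPU sits idle waiting on I/O.

    Assumes communication fully overlaps compute (best case), so the
    step takes max(compute, communication) seconds.
    """
    compute_s = p.flops / p.peak_flops
    comm_s = p.bytes_moved / p.link_bandwidth
    return max(0.0, comm_s - compute_s) / max(compute_s, comm_s)


# Same workload, two hypothetical link speeds: 100 GB/s vs 400 GB/s.
slow = StepProfile(flops=1e15, bytes_moved=200e9, peak_flops=1e15, link_bandwidth=100e9)
fast = StepProfile(flops=1e15, bytes_moved=200e9, peak_flops=1e15, link_bandwidth=400e9)
print(f"slow link: {idle_fraction(slow):.0%} idle")  # slow link: 50% idle
print(f"fast link: {idle_fraction(fast):.0%} idle")  # fast link: 0% idle
```

With the slower link, transfers take 2 s against 1 s of compute, so half of every step is spent waiting; quadrupling the bandwidth hides communication entirely in this idealized model.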

2. Radical Energy Efficiency

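Copper links lose energy to electrical resistance, and at high data rates the signal weakens quickly with distance, so it needs power-hungry circuitry to equalize and retime it. Most of that power ends up as heat, and at the scale of a 100,000-GPU cluster the waste is substantial. Light traveling through optical fiber loses very little energy over distance, which can make optical connections 50-70% more energy-efficient per bit than copper on longer links.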
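Per-bit energy translates directly into power: watts = (joules per bit) × (bits per second), and conveniently 1 pJ/bit at 1 Tb/s is exactly 1 W. The sketch below applies this to a 100,000-GPU cluster. The per-bit energies (10 pJ/bit electrical, 4 pJ/bit optical, reading “50-70% more efficient” as 50-70% less energy per bit) and the 10 Tb/s of I/O per GPU are hypothetical placeholders, not vendor figures.

```python
def link_power_watts(energy_pj_per_bit: float, bandwidth_tbps: float) -> float:
    """Power = energy per bit x bit rate; 1 pJ/bit at 1 Tb/s equals 1 W."""
    return energy_pj_per_bit * bandwidth_tbps


def cluster_io_power_kw(energy_pj_per_bit: float, bandwidth_tbps_per_gpu: float, num_gpus: int) -> float:
    """Total interconnect power for a cluster, in kilowatts."""
    return link_power_watts(energy_pj_per_bit, bandwidth_tbps_per_gpu) * num_gpus / 1000


# Hypothetical placeholder values, not measurements.
GPUS = 100_000
IO_TBPS_PER_GPU = 10.0
electrical_kw = cluster_io_power_kw(10.0, IO_TBPS_PER_GPU, GPUS)
optical_kw = cluster_io_power_kw(4.0, IO_TBPS_PER_GPU, GPUS)
print(f"electrical: {electrical_kw:,.0f} kW, optical: {optical_kw:,.0f} kW")
# electrical: 10,000 kW, optical: 4,000 kW
```

Under these assumptions, interconnect power alone drops from about 10 MW to 4 MW, before counting the cooling needed to remove that heat.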

3. Market Democratization (Anti-Lock-in)

By forming an MSA (Multi-Source Agreement), the industry aims to ensure that, for example, a Broadcom optical component will work with an Nvidia or AMD chip. This helps prevent vendor lock-in and should drive down the Total Cost of Ownership (TCO) for data center operators like Meta and Microsoft.
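An MSA works by fixing a shared set of interface parameters that every vendor's part must meet, so any two conforming parts can be paired. The sketch below models that idea as a simple conformance check. The parameter names and values in `MSA_REQUIRED` (lane rate, wavelength, FEC scheme) and the vendor names are hypothetical stand-ins; they are not taken from the OCI MSA specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpticalPort:
    vendor: str
    lane_rate_gbps: int  # per-lane signaling rate
    lanes: int           # number of optical lanes
    wavelength_nm: int   # operating wavelength
    fec: str             # forward error correction scheme


# Hypothetical shared parameters; a real MSA defines its own list.
MSA_REQUIRED = {"lane_rate_gbps": 200, "wavelength_nm": 1310, "fec": "RS(544,514)"}


def conforms(port: OpticalPort) -> bool:
    """True if the port matches every parameter the agreement fixes."""
    return all(getattr(port, name) == value for name, value in MSA_REQUIRED.items())


def can_link(a: OpticalPort, b: OpticalPort) -> bool:
    """Ports from any vendors interoperate if both conform and lane counts match."""
    return conforms(a) and conforms(b) and a.lanes == b.lanes


gpu_side = OpticalPort("VendorA", 200, 8, 1310, "RS(544,514)")
switch_side = OpticalPort("VendorB", 200, 8, 1310, "RS(544,514)")
off_spec = OpticalPort("VendorC", 200, 8, 1550, "RS(544,514)")
print(can_link(gpu_side, switch_side))  # True
print(can_link(gpu_side, off_spec))     # False
```

The vendor field never enters the check, which is the point of an MSA: compatibility depends only on the agreed parameters, not on who built the part.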

Comparison: Proprietary NVLink vs. Open OCI MSA

| Feature | Current Approach (NVLink 5.0) | Future OCI MSA (Optical) |
|---|---|---|
| Physical Medium | Copper (DAC/ACC cables) | Silicon photonics (fiber) |
| Transmission Distance | Short (about 2-3 meters or less) | Hundreds of meters |
| Energy Efficiency | High consumption (thermal loss) | 50-70% more efficient per bit |
| Ecosystem | Closed (Nvidia only) | Open (multi-vendor) |
| Architecture | Tight clusters (scale-up) | Distributed clusters spanning many racks |
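Longer reach has a cost the table does not show: signals still travel at a finite speed. One-way propagation delay in fiber is t = n·d/c, where n is the fiber's group index. The sketch below assumes n = 1.47, a typical value for silica single-mode fiber, which works out to roughly 5 ns per meter. Signals in copper cables also travel at a large fraction of c, so the advantage of optics in this table is reach and bandwidth over distance, not a lower speed-of-light delay.

```python
C_M_PER_S = 299_792_458.0  # speed of light in vacuum
FIBER_GROUP_INDEX = 1.47   # assumption: typical silica single-mode fiber


def fiber_delay_ns(distance_m: float, group_index: float = FIBER_GROUP_INDEX) -> float:
    """One-way propagation delay t = n * d / c, in nanoseconds."""
    return group_index * distance_m / C_M_PER_S * 1e9


for d in (2, 30, 300):  # hypothetical distances: in-rack, across a row, across a data hall
    print(f"{d:>4} m: {fiber_delay_ns(d):8.1f} ns")
```

At 2 m the delay is about 10 ns; at 300 m it is roughly 1.5 µs, which is why spreading a tightly coupled cluster across a data hall still calls for latency-aware placement.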

The Secret Weapon: Co-packaged Optics (CPO)

The most significant engineering shift in the OCI standard is co-packaged optics. Instead of routing electrical signals across the board to a separate pluggable transceiver, the optical engine is integrated directly into the GPU/CPU package. Data leaves the package as light after only millimeters of electrical path, which removes long copper traces and some conversion stages and cuts both power and latency.
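The difference between a pluggable transceiver and co-packaged optics can be sketched as a chain of stages that each cost energy per bit. In this minimal model, the pluggable path needs a long-reach SerDes to drive the board trace plus a DSP/retimer inside the module, while the CPO path replaces both with a short die-to-engine link. The per-stage pJ/bit figures are placeholders chosen only to make the arithmetic readable, not measured values, and the optical engine cost is held equal in both paths.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    energy_pj_per_bit: float  # placeholder value, not a measurement


PLUGGABLE_PATH = [
    Stage("long-reach host SerDes driving a board trace", 4.0),
    Stage("module DSP / retimer", 3.0),
    Stage("optical engine (laser, modulator, receiver)", 3.0),
]

CPO_PATH = [
    Stage("short die-to-engine link inside the package", 1.0),
    Stage("optical engine (laser, modulator, receiver)", 3.0),
]


def path_energy(path: list[Stage]) -> float:
    """Total energy per bit along a signal path."""
    return sum(stage.energy_pj_per_bit for stage in path)


for label, path in (("pluggable", PLUGGABLE_PATH), ("co-packaged", CPO_PATH)):
    print(f"{label}: {len(path)} stages, {path_energy(path):.1f} pJ/bit")
```

All of the modeled saving comes from the electrical side of the path, matching the idea that CPO shrinks the chip-to-optics distance from centimeters to millimeters.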

What This Means for the Future of AI (GPT-5 and Beyond)

Until now, the largest tightly coupled GPU systems have been limited to the size of a single “supercomputer in a box” (like Nvidia’s DGX) or a single rack. With OCI, an entire data center could function much more like one giant GPU.

This paves the way for training models with tens of trillions of parameters. As OpenAI pushes toward more advanced video generation and reasoning models, the “optical fabric” provided by OCI MSA could become the nervous system that makes them possible.
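A quick calculation shows why models of this size cannot live on one machine. Mixed-precision training with the Adam optimizer is commonly estimated at about 16 bytes of model state per parameter (16-bit weights and gradients, plus 32-bit master weights and two 32-bit optimizer moments). The sketch assumes a hypothetical accelerator with 192 GB of memory and ignores activations, so its answers are lower bounds.

```python
import math


def min_gpus_for_state(num_params: float, bytes_per_param: float, gpu_memory_gb: float) -> int:
    """Lower bound on GPUs needed just to hold model state (ignores activations)."""
    total_bytes = num_params * bytes_per_param
    return math.ceil(total_bytes / (gpu_memory_gb * 1e9))


PARAMS = 10e12       # 10 trillion parameters, the low end of "tens of trillions"
GPU_MEMORY_GB = 192  # assumption: hypothetical per-GPU memory

print(min_gpus_for_state(PARAMS, 2, GPU_MEMORY_GB))   # 16-bit weights only: 105
print(min_gpus_for_state(PARAMS, 16, GPU_MEMORY_GB))  # full training state: 834
```

Because that state is sharded across hundreds of GPUs, every training step exchanges gradients and parameters between them, which is exactly the traffic a faster interconnect has to carry.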

Technical Glossary: The Future of AI Networking

- OCI MSA (Optical Compute Interconnect Multi-Source Agreement): An industry group (including Nvidia, Meta, and Microsoft) working on a unified, interoperable specification for optical links, so that hardware from different manufacturers can communicate without proprietary barriers.

- Silicon Photonics: A technology that builds optical components such as waveguides, modulators, and photodetectors directly on silicon chips. Light, typically supplied by an external or bonded laser because silicon itself emits light poorly, carries the data instead of electrical signals, allowing much higher bandwidth and lower energy loss over distance.

- CPO (Co-packaged Optics): A packaging method in which optical engines are placed inside the same package as the GPU or CPU. This shortens the electrical path between the chip and the optics from centimeters to millimeters, reducing power and latency.

- SerDes (Serializer/Deserializer): A pair of functional blocks used in high-speed communications to convert data between parallel and serial forms. Optical links do not eliminate SerDes; in the OCI context they shorten the copper channel each SerDes must drive, which helps overcome the physical limits of sending electrical signals over distance.

- I/O Bottleneck: A situation where the processing power of a GPU is so high that the input/output (I/O) systems cannot feed it data fast enough. This leads to “GPU starvation,” where expensive chips sit idle waiting for information.

- Latency: The time delay between a data request and its delivery. In large-scale AI training (like GPT-5), thousands of GPUs synchronize many times per training step, so small per-message delays are paid over and over and can add up to a noticeable share of total training time.

- NVLink: A proprietary high-speed interconnect developed by Nvidia. While highly efficient, it is a “closed” system, whereas the new OCI MSA is an “open” standard designed for a multi-vendor ecosystem.
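The core job of a SerDes, as described in the glossary above, is converting between parallel words and a serial bit stream. The sketch below shows only that conversion, most significant bit first; real SerDes hardware also handles line coding, clock recovery, equalization, and multi-level signaling such as PAM4, none of which is modeled here.

```python
def serialize(words: list[int], width: int) -> list[int]:
    """Convert parallel words into a serial bit stream, most significant bit first."""
    bits = []
    for word in words:
        if not 0 <= word < (1 << width):
            raise ValueError(f"word {word} does not fit in {width} bits")
        for i in range(width - 1, -1, -1):
            bits.append((word >> i) & 1)
    return bits


def deserialize(bits: list[int], width: int) -> list[int]:
    """Regroup a serial bit stream into parallel words of `width` bits."""
    if len(bits) % width:
        raise ValueError("bit stream length is not a multiple of the word width")
    words = []
    for start in range(0, len(bits), width):
        word = 0
        for bit in bits[start:start + width]:
            word = (word << 1) | bit
        words.append(word)
    return words


stream = serialize([0xA, 0x3], width=4)
print(stream)                         # [1, 0, 1, 0, 0, 0, 1, 1]
print(deserialize(stream, width=4))   # [10, 3]
```

The serial side carries one bit per time slot, which is why each lane must run at a very high rate and why the electrical channel it drives matters so much.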